DeepSeek has released a new paper,Size Does Not Matter (2025) Hindi Web Series with co-founder Liang Wenfeng credited as a contributor, detailing how its latest large language model DeepSeek-V3 achieves efficient training and inference using only 2,048 H800 GPUs – significantly fewer than the tens of thousands typically required. The team attributes this efficiency to four key innovations: memory optimization through multi-head latent attention (MLA), computational savings via a Mixture-of-Experts (MoE) design with FP8 precision, communication improvements using a multi-plane network topology, and faster inference through multi-token prediction (MTP). With MLA, KV cache memory usage is cut to just 70KB per token, up to 1/7 that of competing models. MoE architecture activates only 37 billion of the model’s 671 billion parameters per forward pass, reducing training costs by 90% compared to dense models. FP8 training further halves compute and memory usage, with minimal accuracy tradeoff. Beyond the model, the paper also outlines five future directions for AI hardware design, advocating for tighter integration between software and hardware to address memory, compute, and networking bottlenecks. [36Kr, in Chinese]

(Editor: {typename type="name"/})

Best Presidents' Day deal: Save $250 on Peloton Bike

Best Presidents' Day deal: Save $250 on Peloton Bike

Cooking with Sybille Bedford by Valerie Stivers

Cooking with Sybille Bedford by Valerie Stivers

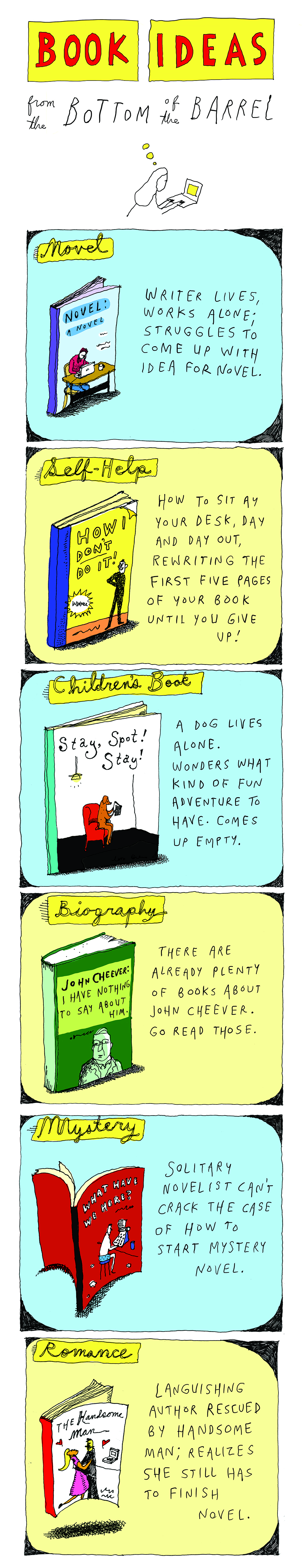

Book Ideas from the Bottom of the Barrel

Book Ideas from the Bottom of the Barrel

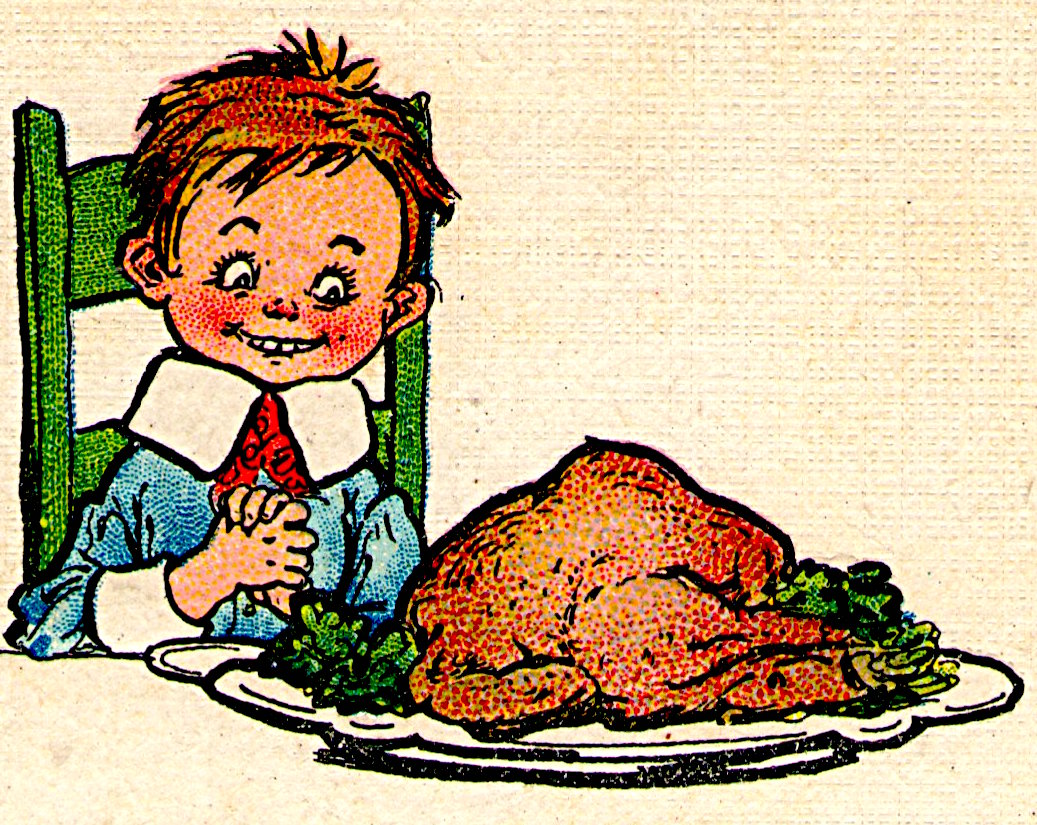

Jane Stern: Thanksgiving Is the Nexus of All Despair

Jane Stern: Thanksgiving Is the Nexus of All Despair

Texas vs. Arizona State football livestreams: kickoff time, streaming deals, and more

Texas vs. Arizona State football livestreams: kickoff time, streaming deals, and more

A glance at the best self-emptying robot vacuum deals ahead of Prime Day Budget pick

...[Details]

A glance at the best self-emptying robot vacuum deals ahead of Prime Day Budget pick

...[Details]

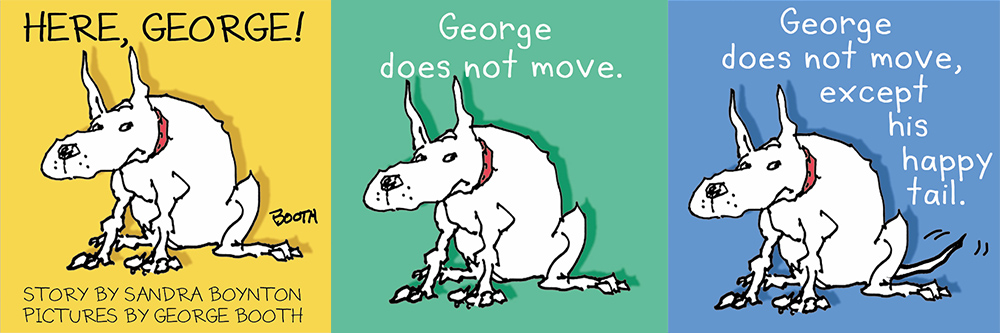

Drawing Dogs in George Booth's Living Room

Drawing Dogs in George Booth’s Living RoomBy Sophie BrickmanDecember 28, 2017Best of 2017Early pages

...[Details]

Drawing Dogs in George Booth’s Living RoomBy Sophie BrickmanDecember 28, 2017Best of 2017Early pages

...[Details]

Redux: Elizabeth Bishop, Evan S. Connell, and Diane di Prima by The Paris Review

Redux: Elizabeth Bishop, Evan S. Connell, and Diane di PrimaBy The Paris ReviewDecember 24, 2017Redu

...[Details]

Redux: Elizabeth Bishop, Evan S. Connell, and Diane di PrimaBy The Paris ReviewDecember 24, 2017Redu

...[Details]

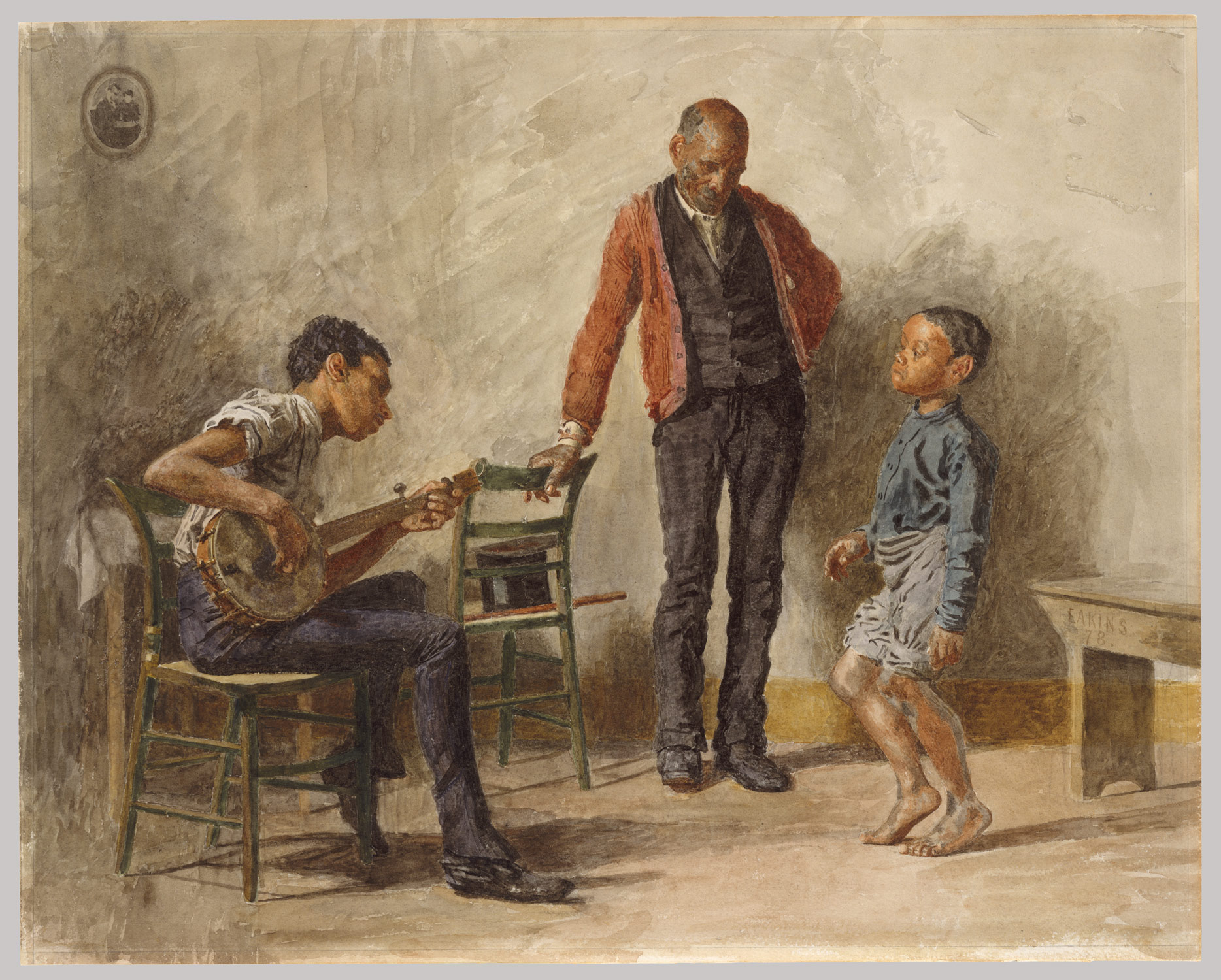

Reading Between the Lines: “Gilded Age Drawings at the Met”

Reading Between the Lines: “Gilded Age Drawings at The Met”By Cynthia PayneDecember 6, 2017On ArtTho

...[Details]

Reading Between the Lines: “Gilded Age Drawings at The Met”By Cynthia PayneDecember 6, 2017On ArtTho

...[Details]

We tried Sony's new XYN headset: a game

Over the past few years, the market has been flooded with VR headsets, including theMeta Quest,Apple

...[Details]

Over the past few years, the market has been flooded with VR headsets, including theMeta Quest,Apple

...[Details]

A Mother’s Ninth-Century Manual on How to Be a ManBy Edmund WhiteDecember 27, 2017Best of 2017We’re

...[Details]

A Mother’s Ninth-Century Manual on How to Be a ManBy Edmund WhiteDecember 27, 2017Best of 2017We’re

...[Details]

What Is the Political Responsibility of the Artist? by Taylor Plimpton

What Is the Political Responsibility of the Artist?By Taylor PlimptonNovember 21, 2017Arts & Cul

...[Details]

What Is the Political Responsibility of the Artist?By Taylor PlimptonNovember 21, 2017Arts & Cul

...[Details]

The Dark Feels Different in November

The Dark Feels Different in NovemberBy Nina MacLaughlinDecember 27, 2017Best of 2017We’re away until

...[Details]

The Dark Feels Different in NovemberBy Nina MacLaughlinDecember 27, 2017Best of 2017We’re away until

...[Details]

Then and Now: 5 Generations of GeForce Graphics Compared

Art from Guantánamo by Erin Thompson

Art from Guantánamo By Erin ThompsonOctober 2, 2017Arts & CultureMuhammad Ansi (released from

...[Details]

Art from Guantánamo By Erin ThompsonOctober 2, 2017Arts & CultureMuhammad Ansi (released from

...[Details]

Wordle today: The answer and hints for January 28, 2025

A Rare Look Inside the Library at Grey Gardens

接受PR>=1、BR>=1,流量相当,内容相关类链接。