DeepSeek has released a new paper, with co-founder Liang Wenfeng credited as a contributor, detailing how its latest large language model, DeepSeek-V3, achieves efficient training and inference using only 2,048 H800 GPUs, significantly fewer than the tens of thousands typically required. The team attributes this efficiency to four key innovations: memory optimization through multi-head latent attention (MLA), computational savings via a Mixture-of-Experts (MoE) design with FP8 precision, communication improvements using a multi-plane network topology, and faster inference through multi-token prediction (MTP). With MLA, KV cache memory usage is cut to just 70KB per token, as little as one-seventh that of competing models. The MoE architecture activates only 37 billion of the model's 671 billion parameters per forward pass, reducing training costs by 90% compared to dense models. FP8 training further halves compute and memory usage, with minimal accuracy tradeoff. Beyond the model, the paper also outlines five future directions for AI hardware design, advocating tighter integration between software and hardware to address memory, compute, and networking bottlenecks. [36Kr, in Chinese]
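To put the reported figures in perspective, the arithmetic can be sketched in a few lines of Python. This is only a rough illustration: the function names are hypothetical, the 128K-token context length is an assumed example rather than a figure from the report, and 1KB is taken to mean 1,024 bytes.

```python
def active_fraction(active_params: float, total_params: float) -> float:
    """Share of parameters used per forward pass in an MoE model."""
    return active_params / total_params


def kv_cache_bytes(num_tokens: int, kb_per_token: float = 70.0) -> float:
    """Approximate KV cache size, assuming 1 KB = 1,024 bytes."""
    return num_tokens * kb_per_token * 1024


if __name__ == "__main__":
    # Figures reported in the article: 37B active out of 671B total parameters.
    share = active_fraction(37e9, 671e9)
    print(f"Active parameters per forward pass: {share:.1%}")

    # Assumed example context length (not from the article): 128K tokens.
    context_tokens = 128 * 1024
    gib = kv_cache_bytes(context_tokens) / 1024**3
    print(f"KV cache at {context_tokens} tokens: {gib:.2f} GiB")
```

Under these assumptions, roughly 5.5% of the parameters are active per forward pass, and a 128K-token context would need about 8.75 GiB of KV cache per sequence.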


Best robot vacuum deal: Get the Roborock Q5 Max for 53% off at Amazon

Best robot vacuum deal: Get the Roborock Q5 Max for 53% off at Amazon

Dyson's new affordable Supersonic isn't exactly cheap

Dyson's new affordable Supersonic isn't exactly cheap

How to post on Instagram from a laptop or desktop

How to post on Instagram from a laptop or desktop

Snapchat's My AI chatbot posted a Story then stopped responding. Users freaked out.

Snapchat's My AI chatbot posted a Story then stopped responding. Users freaked out.

Razer Kishi V2 deal: Snag one for 50% off

SAVE 50%: As of Feb. 28, the Razer Kishi V2 mobile gaming controller is 50% off the original price.

...[Details]

SAVE 50%: As of Feb. 28, the Razer Kishi V2 mobile gaming controller is 50% off the original price.

...[Details]

Dear Diary: An Interview with Esther Pearl Watson by Meg Lemke

Dear Diary: An Interview with Esther Pearl WatsonBy Meg LemkeJune 20, 2014At WorkEsther Pearl Watson

...[Details]

Dear Diary: An Interview with Esther Pearl WatsonBy Meg LemkeJune 20, 2014At WorkEsther Pearl Watson

...[Details]

Shark's two new hair tools look like solid dupes

The thing about Shark is that they're a vacuum company that knows how to make a good beauty dupe. Be

...[Details]

The thing about Shark is that they're a vacuum company that knows how to make a good beauty dupe. Be

...[Details]

The Morning News Roundup for June 12, 2014

Daymares, and Other NewsBy Dan PiepenbringJune 12, 2014On the ShelfArthur Tress, Child Buried in San

...[Details]

Daymares, and Other NewsBy Dan PiepenbringJune 12, 2014On the ShelfArthur Tress, Child Buried in San

...[Details]

Virtual Reality: The True Cost of Admission (and Why It Doesn't Matter)

How to create a GIF from a TikTok video

TikTok makes it easy for you to turn a video into a GIF.Gone are the days of screen recording a vide

...[Details]

TikTok makes it easy for you to turn a video into a GIF.Gone are the days of screen recording a vide

...[Details]

School uses ChatGPT to determine which books are banned

In the lead-up to the 2023-24 school year, 19 books (including one entire book series) were pulled f

...[Details]

In the lead-up to the 2023-24 school year, 19 books (including one entire book series) were pulled f

...[Details]

Happy Birthday, William Crookes!

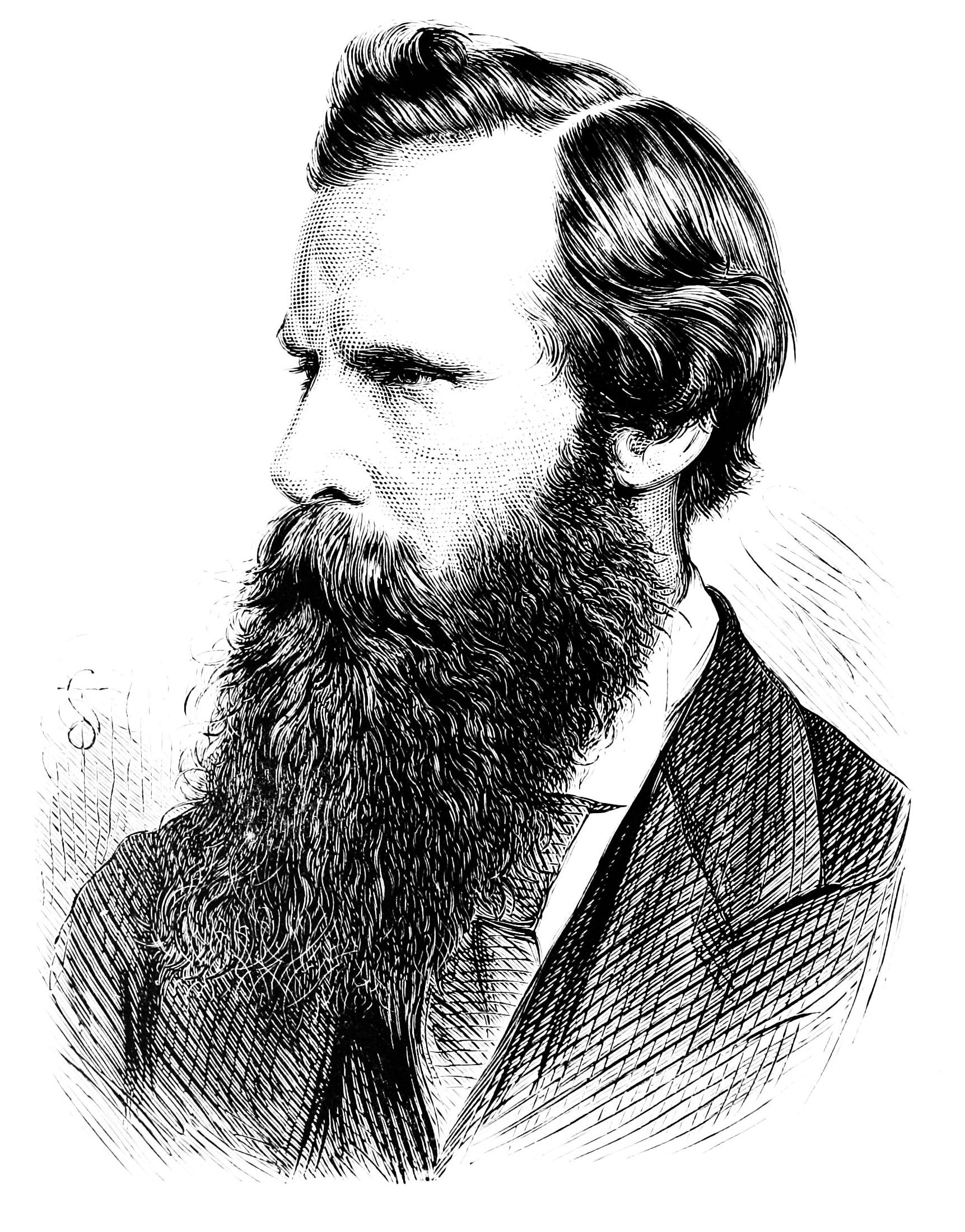

True Objective OccurrencesBy Dan PiepenbringJune 17, 2014Arts & CultureCrookes in an 1876 portra

...[Details]

True Objective OccurrencesBy Dan PiepenbringJune 17, 2014Arts & CultureCrookes in an 1876 portra

...[Details]

Today's Hurdle hints and answers for April 7, 2025

If you like playing daily word games like Wordle, then Hurdle is a great game to add to your routine

...[Details]

If you like playing daily word games like Wordle, then Hurdle is a great game to add to your routine

...[Details]

Ergatta rower review: Worth the high price tag? We tested to find out.

I enjoy rowing. It's a wonderful cardio and strength workout, and I especially like it in small dose

...[Details]

I enjoy rowing. It's a wonderful cardio and strength workout, and I especially like it in small dose

...[Details]
